Yesterday while leaving the LA Photo exhibition in Santa Monica — a kind of catch-all retail event of photography through the commercial curatorial world of private galleries — I happened across a small scrum of people holding anodized extruded rectangles close to bush leaves, flowers and tiny bits of dirt on the ground. Lytro was in town somehow — or stalking about doing a bit of half-assed DIY guerrilla marketing.

There. I’m a Lytro hater. And maybe I’m getting old and cranky and beginning to catch myself thinking — “I just don’t understand what kids are up to these days..” That’s a sign of something, I suppose. Oftentimes I can riddle it through and understand, even if I wouldn’t do the “whatever it is” myself.

Nevertheless, I don’t understand what Lytro’s doing. Let me try and riddle it through.

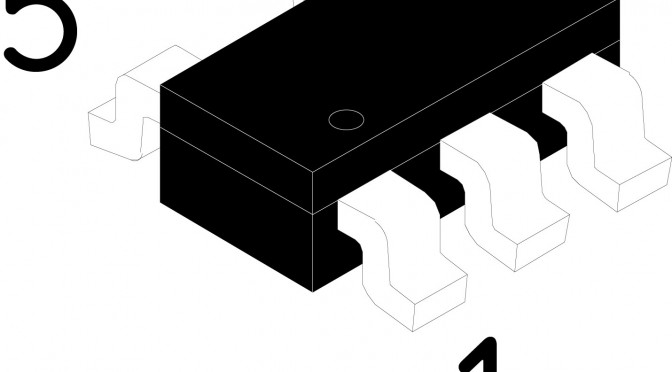

For those of you who, unlike me, don’t scour the networks for any sign or hint of an evolution in photography and image making generally, you may not know about Lytro’s weirdly optimistic talk about “light field imaging” techniques that are meant to revolutionize photography.

Well, this is it. Effectively, a proper bit of patent gold that allows one to capture a light field (their stoopid way of basically saying “image” or “photograph”) and derive the path of every light ray in such a way that you can focus *after* you’ve captured your light field. What that means practically is that you never have to worry about focus ever again, and you can recompose the focus point forever afterwards. So — all that lovely, soft bokeh (née depth of field) that has come to mean “professional” photography because you previously could only get nice, lovely, soft depth of field with an expensive, “fast” lens and a big sensor? Well — now you can walk around with an anodized extruded rectangular tube and get it as well. It’ll cost you a bit less than that fast lens would’ve, and you get all the advantages of touching a little postage stamp sized screen to control the camera, and you can run your finger along a side of the rectangle to access zoom controls, and — best of all — you can shove the extruded rectangle at your friends and capture *their* light field.
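
For the curious, the focus-after-the-fact trick can be sketched in a few lines. This is a toy, assuming a one-dimensional light field stored as a handful of views taken from slightly different spots across the lens. The function names and the made-up one-dot scene are mine, and this is the generic shift-and-add idea, not Lytro’s actual pipeline.

```python
def refocus(views, offsets, alpha):
    """Shift-and-add refocus on a toy 1D light field (illustrative only).

    views   -- equal-length rows of pixel values, one row per view
    offsets -- each view's position across the aperture, e.g. -1, 0, 1
    alpha   -- pixels of shift per unit offset; picking it picks the focal plane
    """
    width = len(views[0])
    out = []
    for x in range(width):
        total, count = 0.0, 0
        for view, u in zip(views, offsets):
            src = x + round(alpha * u)  # where this view saw the same ray
            if 0 <= src < width:
                total += view[src]
                count += 1
        out.append(total / count if count else 0.0)
    return out


def make_views(width, offsets, position, disparity):
    """Hypothetical scene: a single bright dot that lands
    disparity * offset pixels away from `position` in each view."""
    views = []
    for u in offsets:
        row = [0.0] * width
        row[position + disparity * u] = 1.0
        views.append(row)
    return views


offsets = [-1, 0, 1]
views = make_views(9, offsets, position=4, disparity=2)

sharp = refocus(views, offsets, alpha=2)   # shift matches the dot's disparity
blurry = refocus(views, offsets, alpha=0)  # no shift: the dot smears out
```

When `alpha` matches the dot’s disparity, the three views line up and the dot comes back as a single pixel. With any other `alpha` the same data averages into a smear across several pixels, which is the whole “refocus later” pitch in miniature.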

Seriously though — if I were to do a less snarky critique, I’d say that they have a few things all turned around here.

First, they missed a serious opportunity to play up the apparent fascination with analog, or retro-analog, or analog-done-digital. People seem to be in love with cameras that are digital, but harken back clearly to pre-digital photography. I’m talking about the industrial design mostly — but cameras like the Fuji X100 are beautiful, digital and, in their form, signal image-making/image-taking. Things like Instagram filters — whatever you may think about them — signal back to the vagaries and delights of analog film chemistry and the fun of processing in the dark room to achieve specific tonal and visual styles. There’s something about the analog that’s come back. That’s a thing. Perhaps it’s digital getting more thoughtful or poetic or nostalgic and then we’ll move onto a new, new comfort zone with our gizmos and gadgets and they’ll become less fetish things than lovely little ways to capture and share our lives with pleasing accents and visual stylings. Pixel-perfect will mean something else. Roughness and grit will be an aesthetic.

The extruded rounded rectangle isn’t bad, but it’s not so much camera as it is telescope. And if it’s signaling telescope, I’ll want to hold the thing up flush to my eyeball, like a pirate or sea captain. And that’s fun as well. More fun, I’d suggest, than holding it out like I was getting ready to chuck a spear at someone.

The fact that I have to hold it several inches away so I can pull focus on the display? Well, that’s several inches away from my subject and that little physical alignment schema of photographer —> intrusive-object —> subject is a bad set up. It ruins the intimacy of image making. I think it’s a well-appreciated, if thoroughly ignored, aspect of the history of camera design that the viewfinder makes a difference in the aesthetic and compositional outcome of picture taking. That’s a little bit of lovely, low-hanging fruit in the IxD possibilities for the future of image-making. It’s less a technology feature than a behavior feature that can be enabled by some thoughtful collaboration amongst design+technology.

The posture some folks take now of holding their camera out at nearly arm’s length to compose using the LCD screen on the back of many cameras? That’s bad photography form. You’re taking an image of what your eye sees, not what your camera sees. The intrusion of the visual surround that your peripheral vision naturally takes in when you don’t compose with your eye up to the viewfinder changes what you compose and how you compose it. I’m not saying there are rules, but there are better practices for the rituals of photography that lead to better photography and better photographers. Leastways — that’s what I think. It’s why I prefer an SLR or a rangefinder over a little consumer camera with no viewfinder, or a gesture to the viewfinder that’s barely usable.

You should try taking an image using the viewfinder if your camera has one and then never turn back to the LCD. Use the LCD for image sharing — that’s fine. Or for checking your exposure — that’s awesome and maybe one of the best advantages of the LCD. But to compose using the LCD, you’ve effectively lost the advance that the viewfinder brought to photography, which is to compose the view and do so in a way that makes that composition intimate to the photographer’s eye. Everything around is removed and blocked out. There are no visual distractions. What you see is basically what you get. (Some viewfinders don’t have 100% coverage, but they are typically quite close.) When the consumer camera manufacturers introduced thin cameras they had to do away with all the optics that allowed the image coming through the lens to do a couple of bends and then go to the photographer’s eye. And, anyway — all that is extra material, weight, glass, etc. So people started taking photographs by, ironically, moving the camera further away from themselves, forever changing photography.

Well, that’s okay. Things change. I like looking through a viewfinder and grouse whenever I see people not using their viewfinder. And, I suppose I don’t use one many times when taking snaps with the happy-snappy or the camera on my phone. Whatever.

The point is that Lytro missed a fab opportunity to redo that compositional gaffe that a dozen years of consumer electronics innovation dismissed out of hand.

That’s the Industrial Design gaffe. There’s more.

Then there’s the interface. To *zoom* you slide your finger left-and-right along an invisible bit of touch-sensitive zone on the gray plastic-rubber-y bit on the near end of the extruded tubular rectangle. Like..what? Okay — I know we’re all into touch, so Lytro can be forgiven for that. But — hold on? Isn’t zoom like..bring it closer; move it further away? Shouldn’t that be sliding towards me or away from me? Or, wait — I get it. The zoom gesture people may be used to is the circular turning of a traditional glass lens. Zoom out by turning clockwise. Zoom in by turning counter-clockwise. Well here I guess you’re sort of turning from the top of the barrel/rectangle — only you’re not turning, you’re finger-sliding left and right. So, I have no idea how this one came about. While a mechanical interface of some sort was probably not considered practical given the production requirements, tooling, integration and all that — I think this begs for either a telescoping zoom feature, or a mechanical rotating zoom feature. At a minimum, a rotating gesture or a pull-in/pull-out gesture if they’re all hopped up on virtual interfaces mimicking their precedents using things like capacitive touch.

Me? I’ve been into manual focus lately. It’s a good, fun, creative challenge. And even manual exposure control. Not to be nostalgic and old-school-y — it’s just fun, especially when you get it right. (Have I game-ified photography? N’ach.) Now with Lytro, the fact that I can focus forever after I’ve taken the image means I’ve now introduced a shit-ton of extra stuff I’ll end up doing after I’ve taken the image, as if I don’t already have a shit-ton of extra stuff I end up doing because the “tools” that were supposed to make things easier (they do, sorta) allow me to do a shit-ton of extra stuff that I inevitably end up doing just cause the tools say I can. And now there’ll be more? Fab.

And further related to the interface is the fact that they introduced a new dilemma — how to view the image. Just as we got quite comfortable with our browsers being able to show images and videos without having to download and install wacky plug-ins, Lytro reverses all that. Because the Lytro light field image is weird, it’s not a JPEG or something, so browsers and image viewers have no idea how to show the data unless you tell them how — by installing and maintaining something else, which isn’t cool.

And now I suspect we’ll see a world of images where people are trying to do Lytro-y things like stand in close to squirrels so you can fuck around with the focus and be, like..oooOOooh..cool.

I don’t want to be cranky and crotchety about it, but I take a bit of pride in composing and developing the technical-creative skills to have a good idea as to what my image is going to look like based on aperture and shutter speed and all that. I know Lytro is coming from a good place. They have some cool technology and, like..what do you do if you developed cool technology at Stanford? You spin it off and assume the rest of the world *has* to want it, even if it is just a gimmick disguised as a whole camera. Really, this should just be a little twiddle feature of a proper camera, at best — not a camera itself. It’s the classic technologist-engineer-inventor-genius knee-jerk reaction to come up with a fancy new gizmo-y gimmick that looks a bit like a door knob and then put a whole house around it and then say — “hey, check it out! i’ve reinvented the house!”

*shrug*

Why do I blog this? Cause I get frustrated when engineer-oriented folks try to design things without thinking about the history, legacy, existing interaction rituals, behaviors and relevancy to normal humans and basically make things for themselves, which is fine — but then don’t think for a minute about the world outside of the square mile around Palo Alto. It could be so much better if ideas like this were workshopped, evolved, developed to understand in a more complete way what “light field imaging” could be besides something that claims camera-ness in a shitbox form-factor with an objectionable sharing ritual and (probably — all indications suggest as much) a pathetic resolution/mega-pixel count.